CI/CD for applications

CI/CD is at its core less about technology and pipelines, and more about reducing the time it takes to go from idea to working software in production. Our setup lays the groundwork for this by simplifying, hardening and standardizing application deployment processes, and by adhering to well-established industry practices.

A key ingredient is a simplified deployment pipeline - implemented as a single GitHub Actions workflow that runs in your application repository.

The CI/CD setup has standardized on two types of environments: dev (for non-production) and prod (for production). If your environments are called something else internally, you'll still use dev and prod when configuring CI/CD.

The deployment pipeline

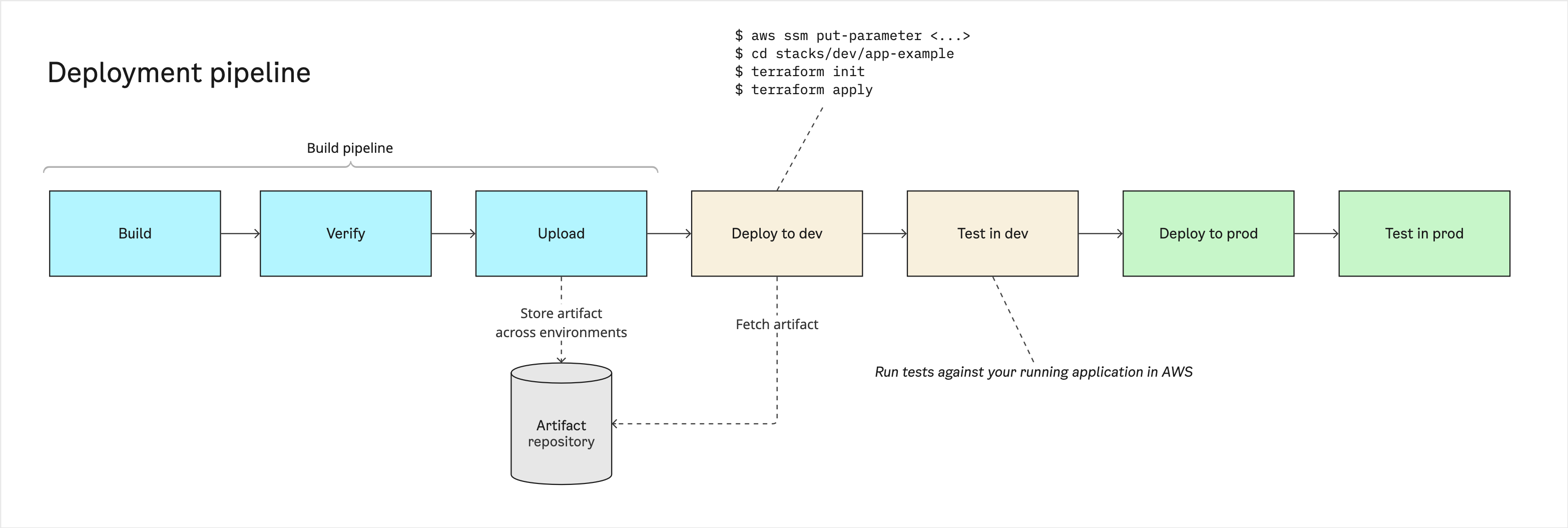

A deployment pipeline is the automated chain that builds, verifies, and deploys your application.

The goal of the deployment pipeline is to ensure that software is always in a releasable state. The pipeline should be the arbiter of releasability, not a human - every check that determines whether a change is fit for production should be incorporated into the pipeline itself, not handled through manual steps, tribal knowledge or ad-hoc scripts. Getting to that point is an iterative process, but it should be the direction to move in.

The pipeline runs on every push to every branch in your repository. Every run goes through the same build pipeline stages (build, verify and upload), and runs on the default branch add steps that safely promote the artifact across environments. Running on every push gives fast, accurate and useful feedback: pull requests are built and tested the same way as the default branch, so broken changes show up in CI before they're merged. Deployment steps only run on the default branch, which makes main your source of truth for what's deployed or about to be.
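The branch rule above can be sketched as a small function. This is a minimal illustration, not the actual workflow logic: the stage names deploy-dev and deploy-prod and the function stages_for are hypothetical, and the default branch is assumed to be main.

```python
# Hypothetical stage names; the real workflow's job names may differ.
BUILD_STAGES = ["build", "verify", "upload"]
DEPLOY_STAGES = ["deploy-dev", "deploy-prod"]


def stages_for(branch: str, default_branch: str = "main") -> list[str]:
    """Return the stages a pipeline run executes for a push to `branch`."""
    # Every push, on any branch, builds, verifies and uploads an artifact.
    stages = list(BUILD_STAGES)
    if branch == default_branch:
        # Only the default branch promotes the artifact across environments.
        stages += DEPLOY_STAGES
    return stages
```

For example, stages_for("feature/login") yields only the three build stages, while stages_for("main") also includes both deployment stages. This is why a pull request gets the same feedback as main without ever deploying anything.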

Artifact strategy

An artifact is the packaged output of a build - a Docker image, a Lambda zip, a built static site, etc.

Build once, deploy many

The deployment pipeline is built around the concept of "build once, deploy many". This is a well-established practice: a pipeline run produces one immutable artifact, and the exact same artifact is deployed to all environments. Avoiding environment-specific builds keeps environments from drifting apart and prevents an artifact built for development from accidentally ending up in production.

Environment-specific values - database URLs, feature flags, API endpoints - are supplied to the artifact at runtime (typically through environment variables). This is what makes the same artifact work across environments.
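As a minimal sketch, an application could read its environment-specific values once at startup. The variable names DATABASE_URL, API_ENDPOINT and FEATURE_NEW_CHECKOUT, as well as AppConfig and load_config, are hypothetical. The point is that the artifact contains no per-environment values, so dev and prod differ only in what the runtime (for example an ECS task definition or Lambda configuration) supplies.

```python
import os
from collections.abc import Mapping
from dataclasses import dataclass


@dataclass(frozen=True)
class AppConfig:
    database_url: str
    api_endpoint: str
    new_checkout_enabled: bool  # hypothetical feature flag


def load_config(environ: Mapping[str, str] = os.environ) -> AppConfig:
    """Build the application's config from environment variables at startup."""
    return AppConfig(
        # Required values: a KeyError here fails fast on a misconfigured runtime.
        database_url=environ["DATABASE_URL"],
        api_endpoint=environ["API_ENDPOINT"],
        # Optional flag, off unless explicitly set to "true" (case-insensitive).
        new_checkout_enabled=environ.get("FEATURE_NEW_CHECKOUT", "false").lower() == "true",
    )
```

Passing the mapping as a parameter keeps the function easy to test. In production it simply defaults to the process environment.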

Static websites

The browser has no environment variables to read from, so for static websites a simple, pragmatic and effective approach is to bundle all environment configurations into the build itself and have the application pick the right one at runtime based on the URL it's served from. See pirates-app-zebra for a minimal reference implementation of this.
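The selection logic can be sketched as follows. This is a language-agnostic illustration written in Python for consistency with the other examples; a real static site would do the same in its JavaScript bundle using the page's hostname. It is not taken from pirates-app-zebra, and the hostnames, config keys and config_for_url are hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical hostnames and values: every environment's config ships in the bundle.
CONFIGS = {
    "app.dev.example.com": {"env": "dev", "api_endpoint": "https://api.dev.example.com"},
    "app.example.com": {"env": "prod", "api_endpoint": "https://api.example.com"},
}


def config_for_url(url: str) -> dict:
    """Pick the bundled config that matches the host the site is served from."""
    host = urlparse(url).hostname  # lowercased by urlparse, without port
    try:
        return CONFIGS[host]
    except KeyError:
        # Failing loudly beats silently falling back to another environment's config.
        raise ValueError(f"no configuration for host {host!r}") from None
```

Raising on an unknown host is a deliberate choice in this sketch. Defaulting to one environment could, for example, make a prod deployment talk to dev APIs.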

Naming convention

Each run of the deployment pipeline produces an artifact with a standardized, unique name. The generate-tag composite action produces tags for your artifacts using the following convention:

This makes it easy to identify which application an artifact belongs to and trace it back to the run that produced it.

Immutability

To improve reproducibility, once an artifact has been uploaded, it is immutable - it cannot be overwritten or replaced.
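The rule can be illustrated with an in-memory store that refuses overwrites. This is a hypothetical sketch of the behavior only; it does not show how the setup enforces immutability. Real registries can enforce the same rule: for example, Amazon ECR repositories can be configured with immutable image tags.

```python
class ArtifactAlreadyExists(Exception):
    """Raised when an upload would overwrite an existing artifact."""


class ImmutableArtifactStore:
    """In-memory stand-in for an artifact registry that refuses overwrites."""

    def __init__(self) -> None:
        self._artifacts: dict[str, bytes] = {}

    def upload(self, tag: str, content: bytes) -> None:
        # Once a tag exists, its content can never change.
        if tag in self._artifacts:
            raise ArtifactAlreadyExists(tag)
        self._artifacts[tag] = content

    def download(self, tag: str) -> bytes:
        return self._artifacts[tag]
```

Because a tag always resolves to the same bytes, redeploying a given tag later reproduces exactly what was deployed before.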

Deployment through Terraform

An application and its infrastructure are tightly interconnected. To ensure that they stay synchronized, the pipeline deploys your application using the same terraform apply that manages its infrastructure:

- The pipeline checks out the infrastructure code associated with a given application (e.g., stacks/dev/app-example).
- The pipeline stores a reference to the new artifact in AWS Systems Manager Parameter Store and runs terraform apply.
- Terraform picks up the new artifact and updates the application runtime: the image in an ECS task definition, the version of a Lambda function or a new static website bundle that should be uploaded to S3.
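The three steps above can be sketched as one function. This is a hypothetical illustration, not the pipeline's actual code. The parameter name, the artifact reference format and the function names are assumptions, as is the idea that the Terraform stack reads the parameter (for example through an aws_ssm_parameter data source). The SSM client and the command runner are injected so the flow can be exercised without AWS. In a real run, ssm_client would be boto3.client("ssm").

```python
import subprocess
from typing import Callable, Sequence

Runner = Callable[[Sequence[str]], None]


def run_checked(cmd: Sequence[str]) -> None:
    # Raise CalledProcessError if the command exits non-zero.
    subprocess.run(cmd, check=True)


def deploy(artifact_ref: str, stack_dir: str, parameter_name: str,
           ssm_client, run: Runner = run_checked) -> None:
    """Record the new artifact in Parameter Store, then let Terraform roll it out."""
    # The parameter is a mutable pointer; the artifact it points to stays immutable.
    ssm_client.put_parameter(
        Name=parameter_name, Value=artifact_ref, Type="String", Overwrite=True
    )
    # Apply the application's stack; Terraform reads the parameter and updates
    # the runtime (ECS task definition, Lambda version or S3 bundle).
    run(["terraform", f"-chdir={stack_dir}", "init", "-input=false"])
    run(["terraform", f"-chdir={stack_dir}", "apply", "-input=false", "-auto-approve"])
```

Because a local terraform apply against the same stack goes through the same path, the pipeline has no deployment mechanism of its own to drift from the infrastructure code.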

Why through Terraform?

Using one tool for both application and infrastructure changes keeps the deployment model simple. Terraform becomes the single source of truth, and the same exact mechanism is used across application types (containers, Lambda, static sites), types of change (application or infrastructure) and contexts (local or pipeline).

The alternative - separate deployment paths per app type (e.g., calling aws ecs update-service from a script) - adds moving parts and lets two different sources define what's actually deployed.

Manual approval

In continuous delivery, software is always ready to release; in continuous deployment, every change that passes the pipeline is released automatically. What separates them is the manual approval gate before production: in continuous delivery, the pipeline pauses there until a human approves.

The deployment pipeline supports manual approval for production through a GitHub Actions deployment environment. Manual approval can be a useful starting point, especially while building confidence in the pipeline.
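The difference can be sketched as control flow. In the real pipeline the gate is a GitHub Actions deployment environment with required reviewers. This hypothetical sketch only models what happens around it: promote, deploy and approve are illustrative names, and the same artifact reference goes to both environments.

```python
from typing import Callable, Optional


def promote(
    artifact_ref: str,
    deploy: Callable[[str, str], None],
    approve: Optional[Callable[[str], bool]] = None,
) -> list[str]:
    """Deploy one artifact to dev, then prod; return the environments deployed to.

    With `approve` set, prod waits for a human decision (continuous delivery).
    With `approve=None`, the artifact goes straight on to prod (continuous deployment).
    """
    deployed = []
    deploy("dev", artifact_ref)
    deployed.append("dev")
    if approve is not None and not approve(artifact_ref):
        return deployed  # rejected: prod keeps running the previous artifact
    deploy("prod", artifact_ref)  # the exact same artifact as dev
    deployed.append("prod")
    return deployed
```

Removing the gate amounts to dropping the approve callback. Nothing else in the flow changes, which is why the gate's value can be judged on its own.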

Toward continuous deployment

It's worth periodically asking what role the approval gate actually serves: if most approvals are rubber stamps, the pipeline is doing the real work and the gate is just adding delivery delay. Even if you don't plan to remove the gate, asking what would have to be true to remove it is a productive exercise. The answer usually points at concrete quality improvements (better tests, observability, ...) or process improvements you can make.